A short guide to understanding geospatial metrics

It has been a good interview. The candidate has shown solid knowledge of data and geospatial topics, and the examples she has given reflect good behaviors and reactions. You have a good feeling about her, and now she is hitting you with very good questions. Then comes the last one, an old acquaintance:

What do you like most about your job?

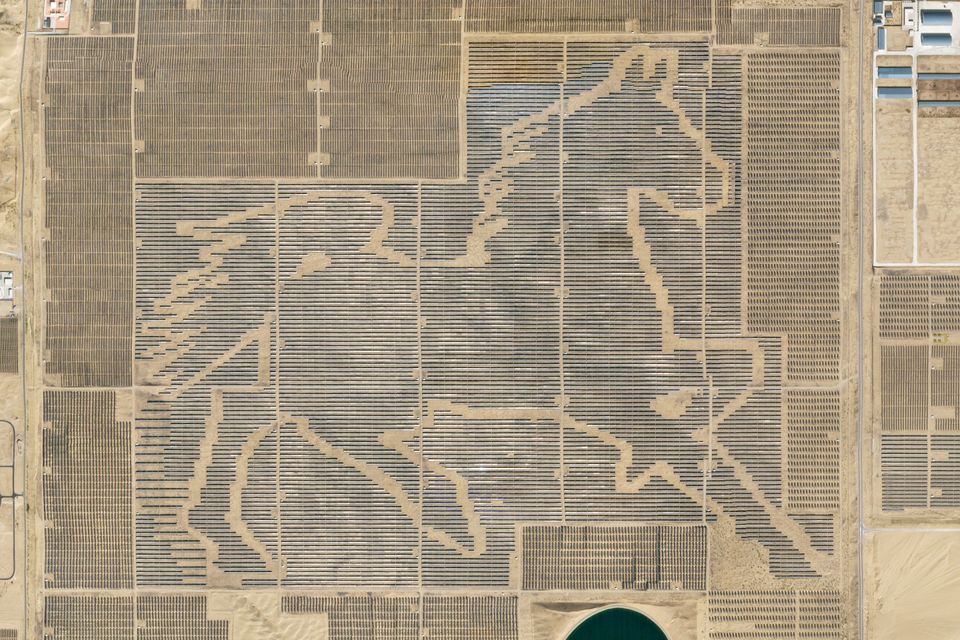

You are happy that she has handed you this opportunity on a silver platter to explain why working in data at an Earth Observation company is quite different from working on a data team in other sectors. So you begin telling her that in your industry, a row in a table represents an image taken by a satellite: an image that captures a single moment in a certain place.

I have written place and not location because they have two distinct meanings. Location is the geometric position on the surface of the Earth. Place is a human construct, loaded with connotations. In that single snapshot, many untold stories are happening at the same time: people going about their business, forests burning, rivers flowing, animals feeding…

Whether you work as a data engineer, analytics engineer, data analyst, or data scientist, being able to trace an anomaly, a data bug, or even a customer complaint back to a single snapshot of the Earth is quite something. It grounds you, gives you context, and eventually helps you understand the nature of the problem.

📗 The Story of an Image

The data behind the pixels

We know that data points are very high-level abstractions of events or objects, and that metrics are aggregations and trends computed from these data points. Geospatial operational data teams, such as mine at Planet, try to improve our organizations' performance across time and space using metrics generated from satellite imagery collection and customer order fulfillment.

When trying to understand the data flow in our system, we do not usually work directly with the pixels (the image itself); we work with the metadata associated with that image. The image metadata tells the story behind the process of getting that image. For example, image metadata can answer questions such as:

- Where and when was the image taken?

- What is its shape?

- What is the percentage of cloud cover?

- Which customer requested the image?

- When was it requested?

All these extra pieces of useful information are attached to the image metadata as the image "flows" through the capture funnel. Timestamps, geometries, percentages: all of them are stored so they can be transformed into metrics.
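To picture what such a record holds, here is a minimal sketch of one as a Python dataclass. The class and field names are hypothetical, chosen to mirror the questions above rather than any real Planet schema.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class ImageMetadata:
    """Hypothetical metadata record for one satellite image (illustrative field names)."""
    image_id: str
    collected_time: datetime                    # where/when: acquisition timestamp
    published_time: Optional[datetime]          # None until the image is published
    footprint_wkt: str                          # image shape as a WKT polygon
    cloud_cover_pct: float                      # percentage of the image covered by clouds
    customer_id: Optional[str] = None           # customer who requested the image, if any
    requested_time: Optional[datetime] = None   # when the order was placed

    def is_published(self) -> bool:
        return self.published_time is not None


record = ImageMetadata(
    image_id="img_001",
    collected_time=datetime(2023, 5, 1, 10, 30),
    published_time=datetime(2023, 5, 1, 14, 0),
    footprint_wkt="POLYGON((0 0, 1 0, 1 1, 0 1, 0 0))",
    cloud_cover_pct=12.5,
    customer_id="customer_42",
    requested_time=datetime(2023, 4, 28, 9, 0),
)
print(record.is_published())  # True
```

Each metric below is, in essence, an aggregation over fields like these.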

In the following section, I will cover the main metrics built from this metadata.

🔱 The triumvirate of geospatial metrics

Three key metrics for monitoring satellite collection as we image and map the world

🌐 Coverage

When you take a selfie with your smartphone, you usually try to capture your whole face. You do not want to miss your left ear. The same happens with a satellite. Coverage can be measured as the percentage of an area of interest that is covered by the image.

coverage = area(collected_geometry ∩ requested_geometry) * 100 / area(requested_geometry)
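As a quick sanity check of the formula, here is a minimal Python sketch that assumes both geometries are axis-aligned rectangles in a planar coordinate system, which keeps the intersection trivial. Real footprints are arbitrary polygons on a curved Earth, where you would rely on ST_* functions or a geometry library instead. `Box`, `intersection`, and `coverage` are hypothetical names.

```python
from typing import NamedTuple


class Box(NamedTuple):
    """Axis-aligned rectangle in planar coordinates (simplifying assumption)."""
    min_x: float
    min_y: float
    max_x: float
    max_y: float

    def area(self) -> float:
        # Negative widths/heights mean an empty box, so clamp them to zero.
        return max(0.0, self.max_x - self.min_x) * max(0.0, self.max_y - self.min_y)


def intersection(a: Box, b: Box) -> Box:
    # The overlap of two axis-aligned boxes is another (possibly empty) box.
    return Box(max(a.min_x, b.min_x), max(a.min_y, b.min_y),
               min(a.max_x, b.max_x), min(a.max_y, b.max_y))


def coverage(collected: Box, requested: Box) -> float:
    """Percentage of the requested area covered by the collected footprint."""
    requested_area = requested.area()
    if requested_area == 0:
        raise ValueError("requested geometry has zero area")
    return intersection(collected, requested).area() * 100 / requested_area


requested = Box(0, 0, 10, 10)  # area of interest
collected = Box(2, 0, 12, 10)  # footprint shifted 2 units east
print(coverage(collected, requested))  # 80.0
```

Note that the numerator is the area of the intersection, not the area of the whole footprint: the 20 units of the image falling outside the area of interest do not count.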

Common reasons why an area is not completely covered by a satellite image include:

- sensor problems

- bad tasking instructions

- gaps or missing data due to downlinking or processing issues

- georeferencing problems

You should also watch out for overlapping images collected within very close times of interest, which are a waste of resources.
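One way to surface such redundant captures is to look for pairs of images whose footprints overlap and whose collection times fall within a chosen window. The sketch below is a naive pairwise comparison under the same rectangle assumption as before; the records, the one-hour window, and the function names are all hypothetical. At scale you would use a spatial index or a spatial join rather than checking every pair.

```python
from datetime import datetime, timedelta
from itertools import combinations

# Hypothetical image records: (image_id, collected_time, footprint bbox as (min_x, min_y, max_x, max_y))
images = [
    ("a", datetime(2023, 5, 1, 10, 0), (0, 0, 10, 10)),
    ("b", datetime(2023, 5, 1, 10, 20), (5, 5, 15, 15)),
    ("c", datetime(2023, 5, 3, 9, 0), (5, 5, 15, 15)),
]


def boxes_overlap(a, b) -> bool:
    # Boxes overlap when they overlap on both axes (edges that only touch are excluded).
    return a[0] < b[2] and b[0] < a[2] and a[1] < b[3] and b[1] < a[3]


def redundant_pairs(records, window: timedelta):
    """Pairs of image ids with overlapping footprints collected within `window` of each other."""
    pairs = []
    for (id1, t1, box1), (id2, t2, box2) in combinations(records, 2):
        if abs(t1 - t2) <= window and boxes_overlap(box1, box2):
            pairs.append((id1, id2))
    return pairs


print(redundant_pairs(images, timedelta(hours=1)))  # [('a', 'b')]
```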

What happens when your target is the whole planet? You can use a global landmass geometry, but this approach gives you a very low resolution. It provides a fast and easy coverage overview, but with such coarse granularity you could miss local areas where coverage falls below your target threshold. A similar approach is to use a set of world-border geometries (or any custom division of the world).

A more elegant solution is to implement a global grid system such as S2 or H3. Thanks to their hierarchical structure, you can select the resolution level based on your analysis needs or your customers' requirements.
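The effect of resolution can be illustrated without S2 or H3 themselves. The sketch below swaps in a plain regular grid on planar coordinates (not S2, not H3) and treats a cell as covered when its centre falls inside at least one footprint, a deliberately coarse approximation. All names and numbers are hypothetical.

```python
import math


def grid_cells(min_x, min_y, max_x, max_y, cell_size):
    """Yield (col, row) indices of the regular grid cells spanning the given bounds."""
    for col in range(math.floor(min_x / cell_size), math.ceil(max_x / cell_size)):
        for row in range(math.floor(min_y / cell_size), math.ceil(max_y / cell_size)):
            yield col, row


def cell_center(col, row, cell_size):
    return ((col + 0.5) * cell_size, (row + 0.5) * cell_size)


def inside(point, box):
    # box is (min_x, min_y, max_x, max_y)
    x, y = point
    return box[0] <= x <= box[2] and box[1] <= y <= box[3]


def grid_coverage(aoi, footprints, cell_size):
    """Return (% of AOI cells covered, list of uncovered cells)."""
    cells = list(grid_cells(*aoi, cell_size))
    uncovered = [c for c in cells
                 if not any(inside(cell_center(*c, cell_size), fp) for fp in footprints)]
    return (len(cells) - len(uncovered)) * 100 / len(cells), uncovered


aoi = (0, 0, 10, 10)
footprints = [(0, 0, 10, 6)]  # the image misses the northern 40% of the AOI
for size in (10, 5, 2):
    pct, gaps = grid_coverage(aoi, footprints, size)
    print(size, pct, len(gaps))
# 10 100.0 0
# 5 50.0 2
# 2 60.0 10
```

With a single coarse cell, the gap disappears entirely and coverage looks perfect; finer cells both expose the gap and locate it, which is the same trade-off you face when picking an S2 or H3 level.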

An example SQL query to calculate the coverage percentage of a given area of interest:

```sql
SELECT
  -- ST_INTERSECTION returns a geometry, so take its area before dividing
  ROUND(
    ST_AREA(ST_INTERSECTION(collected_geometry, requested_geometry)) * 100
      / ST_AREA(requested_geometry),
    2
  ) AS coverage_rate
FROM
  satellite.images
```

The ST_ prefix stands for "Spatial Type", although it was originally read as "Spatial-Temporal", which I like better. Most of these functions are available in modern cloud relational databases and largely mirror their PostGIS counterparts. I highly recommend watching Zachary Deziel's talk about transforming geospatial data using PostGIS and dbt.

⏱️ Latency

The second key metric is latency: the time difference, usually measured in hours, between the acquisition of an image and its publication, when it is ready to be consumed by the final user or customer.

latency = published_time - collected_time
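In code, the formula is a timestamp subtraction converted to hours. A minimal sketch, assuming both timestamps are timezone-aware UTC values so that no time-zone mismatch sneaks into the difference; `latency_hours` is a hypothetical helper.

```python
from datetime import datetime, timezone


def latency_hours(collected_time: datetime, published_time: datetime) -> float:
    """Latency = published_time - collected_time, expressed in (fractional) hours."""
    return (published_time - collected_time).total_seconds() / 3600


collected = datetime(2023, 5, 1, 10, 30, tzinfo=timezone.utc)
published = datetime(2023, 5, 1, 14, 0, tzinfo=timezone.utc)
print(latency_hours(collected, published))  # 3.5
```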

This metric depends on how fast the image is downlinked to the ground station, processed, and exposed in a user interface, cloud bucket, or data API. The faster the image flows from the satellite to the customer, the lower the latency, and the better.

In Earth Observation, images can be delivered at different processing levels. The higher the level, the longer the image spends in the pipeline, and the more derived information, analysis, and variables can be attached to the final product. The trade-off, of course, is higher latency.

Some anomalies or problems that can turn into latency spikes are:

- antenna outages

- reprocessing events

- processing bottlenecks (especially if there is any manual task)

A good practice is to monitor latency at every stage of the process. That way, you can pinpoint failures more easily and respond with fixes much faster.
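A minimal sketch of per-stage monitoring, assuming each image carries one timestamp per pipeline stage. The stage names and timestamps are hypothetical; real stages depend on your system. The per-stage latencies add up to the end-to-end latency, so the largest one points at the bottleneck.

```python
from datetime import datetime

# Hypothetical pipeline stages, in order.
STAGES = ["collected", "downlinked", "processed", "published"]


def stage_latencies(timestamps: dict) -> dict:
    """Hours spent between each pair of consecutive stages for one image."""
    result = {}
    for start, end in zip(STAGES, STAGES[1:]):
        delta = timestamps[end] - timestamps[start]
        result[f"{start}->{end}"] = delta.total_seconds() / 3600
    return result


image = {
    "collected": datetime(2023, 5, 1, 10, 0),
    "downlinked": datetime(2023, 5, 1, 10, 45),
    "processed": datetime(2023, 5, 1, 16, 45),
    "published": datetime(2023, 5, 1, 17, 15),
}
latencies = stage_latencies(image)
print(latencies)
# {'collected->downlinked': 0.75, 'downlinked->processed': 6.0, 'processed->published': 0.5}
print(max(latencies, key=latencies.get))  # downlinked->processed
```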

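An example SQL query to obtain the average image latency by publication date:

```sql
SELECT
  DATE(published_time) AS published_date,
  -- TIMESTAMP_DIFF works on TIMESTAMP values (TIME_DIFF is for TIME values);
  -- minutes / 60 keeps fractional hours instead of truncating to whole hours
  ROUND(AVG(TIMESTAMP_DIFF(published_time, collected_time, MINUTE) / 60), 2) AS latency_hours
FROM
  satellite.images
GROUP BY published_date
ORDER BY published_date ASC
```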

✅ Fulfillment

The third and final metric is fulfillment. In a nutshell, fulfillment measures whether customer orders are being completed. It is usually expressed as the share of fulfilled orders among all finalized orders (fulfilled or not) within a certain period of time, leaving aside the ones still "in progress" to keep it simple. As with latency, it is a metric that data professionals in industries like delivery services would be very familiar with.

fulfillment = fulfilled_orders * 100 / finalized_orders

An order is fulfilled when an image meets all of the customer's requirements, such as:

- latency below a custom threshold

- full coverage of the area of interest

- cloud cover below a custom threshold, with no clouds hiding the area of interest

- no image quality issues

- the image is published and accessible
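These requirements translate naturally into a single predicate. A minimal sketch, assuming each delivered image has already been summarized into the fields below; the class names, fields, and threshold values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    """Hypothetical per-order thresholds set by the customer."""
    max_latency_hours: float
    max_cloud_cover_pct: float
    min_coverage_pct: float = 100.0  # "covers the whole area of interest"


@dataclass
class DeliveredImage:
    """Hypothetical summary of an image delivered against an order."""
    latency_hours: float
    coverage_pct: float
    cloud_cover_pct: float
    aoi_clouded: bool    # True if clouds hide part of the area of interest
    quality_issue: bool
    published: bool


def fulfills(image: DeliveredImage, req: Requirements) -> bool:
    """An order counts as fulfilled only if every requirement holds."""
    return (
        image.latency_hours < req.max_latency_hours
        and image.coverage_pct >= req.min_coverage_pct
        and image.cloud_cover_pct < req.max_cloud_cover_pct
        and not image.aoi_clouded
        and not image.quality_issue
        and image.published
    )


req = Requirements(max_latency_hours=24, max_cloud_cover_pct=10)
print(fulfills(DeliveredImage(6.0, 100.0, 4.0, False, False, True), req))  # True
print(fulfills(DeliveredImage(6.0, 92.5, 4.0, False, False, True), req))   # False: partial coverage
```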

Naturally, the higher the fulfillment, the better. The usual suspects that lower this percentage are:

- being located in a cloudy region (e.g. the tropics)

- a combination of large areas of interest and/or short times of interest

- software or hardware failures

- customer cancellations

An example SQL query to obtain the fulfillment rate per completion date:

```sql
SELECT
  date,
  SUM(IF(state = 'FULFILLED', 1, 0)) * 100 / COUNT(id) AS fulfillment_rate
FROM
  satellite.orders
WHERE
  state != 'IN_PROGRESS'
GROUP BY date
ORDER BY date ASC
```

Here, date is a calculated field equal to the moment of completion (i.e. fulfilled_time for fulfilled orders and expiration_time for expired ones).
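The same logic can be mirrored in Python to check the numbers on a small sample. This sketch assumes only three states (FULFILLED, EXPIRED, IN_PROGRESS) and uses hypothetical order tuples; other terminal states, such as cancellations, would need their own completion timestamp.

```python
from collections import defaultdict
from datetime import date

# Hypothetical orders: (order_id, state, fulfilled_date, expiration_date)
orders = [
    (1, "FULFILLED", date(2023, 5, 1), None),
    (2, "EXPIRED", None, date(2023, 5, 1)),
    (3, "FULFILLED", date(2023, 5, 1), None),
    (4, "IN_PROGRESS", None, None),
    (5, "FULFILLED", date(2023, 5, 2), None),
]


def completion_date(state, fulfilled_date, expiration_date):
    # Fulfilled orders complete when fulfilled; expired ones when they expire.
    return fulfilled_date if state == "FULFILLED" else expiration_date


def fulfillment_by_date(rows):
    totals = defaultdict(lambda: [0, 0])  # completion date -> [fulfilled, finalized]
    for _order_id, state, fulfilled_date, expiration_date in rows:
        if state == "IN_PROGRESS":
            continue  # not finalized yet, so excluded from the denominator
        day = completion_date(state, fulfilled_date, expiration_date)
        totals[day][1] += 1
        if state == "FULFILLED":
            totals[day][0] += 1
    return {day: round(f * 100 / n, 2) for day, (f, n) in sorted(totals.items())}


for day, rate in fulfillment_by_date(orders).items():
    print(day, rate)
# 2023-05-01 66.67
# 2023-05-02 100.0
```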

Going back to the interview, you explain how our geospatial metrics can detect a trend of decreasing fulfillment, which triggers an internal investigation. We check that average latency is in good shape, but coverage is very low. A manual inspection of some of the most poorly covered orders reveals gaps in some of the images. Before any customer complains, we share our findings with our software engineering and space ops teams so they can take care of the problem. Mission accomplished: the candidate smiles, and you finish the interview with very good vibes.

If you want to know more about the intersection between geography and technology, you can subscribe to my newsletter (in Spanish) or connect with me via LinkedIn.